How to bring zero-trust security to microservices

Transitioning to microservices has numerous positive aspects for teams building significant apps, specially people that will have to accelerate the speed of innovation, deployments, and time to market. Microservices also give technologies teams the possibility to safe their apps and expert services better than they did with monolithic code bases.

Zero-rely on safety offers these teams with a scalable way to make safety idiot-proof while managing a expanding selection of microservices and better complexity. That’s ideal. Though it appears counterintuitive at 1st, microservices enable us to safe our apps and all of their expert services better than we ever did with monolithic code bases. Failure to seize that possibility will end result in non-safe, exploitable, and non-compliant architectures that are only likely to become more challenging to safe in the foreseeable future.

Let’s realize why we need to have zero-rely on safety in microservices. We will also evaluation a actual-world zero-rely on safety example by leveraging the Cloud Indigenous Computing Foundation’s Kuma challenge, a common services mesh built on top of the Envoy proxy.

Protection in advance of microservices

In a monolithic software, just about every useful resource that we build can be accessed indiscriminately from just about every other useful resource by means of perform calls since they are all element of the identical code base. Normally, methods are likely to be encapsulated into objects (if we use OOP) that will expose initializers and capabilities that we can invoke to interact with them and transform their state.

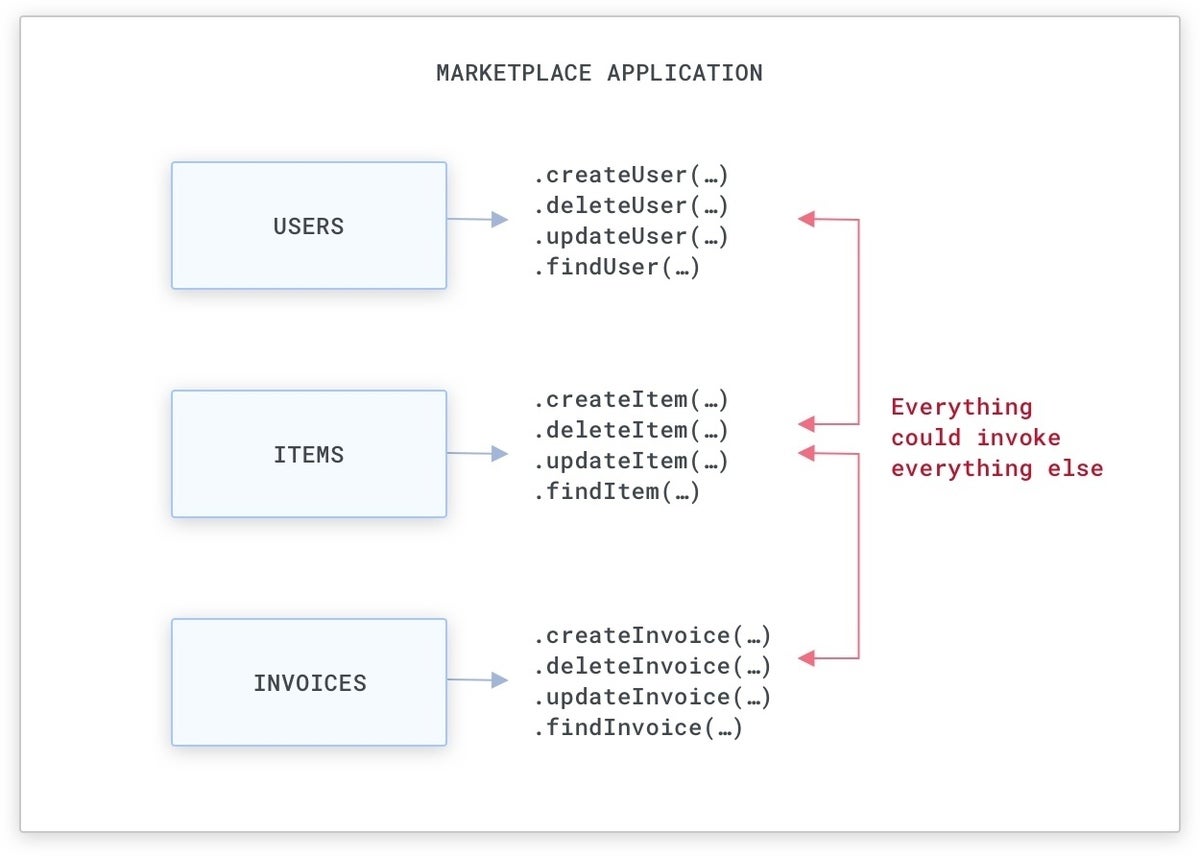

For example, if we are building a market software (like Amazon.com), there will be methods that detect people and the objects for sale, and that produce invoices when objects are offered:

A simple market monolithic software.

Normally, this usually means we will have objects that we can use to both build, delete, or update these methods by means of perform calls that can be utilised from everywhere in the monolithic code base. Even though there are approaches to lessen entry to specific objects and capabilities (i.e., with general public, private, and guarded entry-amount modifiers and package-amount visibility), commonly these techniques are not strictly enforced by teams, and our safety should really not rely on them.

Kong

KongA monolithic code base is easy to exploit, since methods can be probably accessed by everywhere in the code base.

Protection with microservices

With microservices, as an alternative of possessing just about every useful resource in the identical code base, we will have people methods decoupled and assigned to individual expert services, with just about every services exposing an API that can be utilised by yet another services. As an alternative of executing a perform call to entry or transform the state of a useful resource, we can execute a community request.

Kong

KongWith microservices our methods can interact with just about every other by means of services requests above the community as opposed to perform calls in the identical monolithic code base. The APIs can be RPC-based mostly, Rest, or anything at all else really.

By default, this does not transform our problem: Without the need of appropriate barriers in put, just about every services could theoretically eat the uncovered APIs of yet another services to transform the state of just about every useful resource. But since the conversation medium has modified and it is now the community, we can use technologies and styles that run on the community connectivity by itself to set up our barriers and figure out the entry degrees that just about every services should really have in the massive picture.

Comprehension zero-rely on safety

To employ safety procedures above the community connectivity between expert services, we need to have to set up permissions, and then check out people permissions on just about every incoming request.

For example, we may want to enable the “Invoices” and “Users” expert services to eat just about every other (an bill is often linked with a person, and a person can have numerous invoices), but only enable the “Invoices” services to eat the “Items” services (given that an bill is often linked to an product), like in the subsequent circumstance:

Kong

KongA graphical illustration of connectivity permissions in between expert services. The arrows and their direction figure out whether expert services can make requests (environmentally friendly) or not (crimson). For example, the Merchandise services simply cannot eat any other services, but it can be eaten by the Invoices services.

Right after location up permissions (we will discover shortly how a services mesh can be utilised to do this), we then need to have to check out them. The element that will check out our permissions will have to figure out if the incoming requests are becoming despatched by a services that has been permitted to eat the existing services. We will employ a check out somewhere alongside the execution route, some thing like this:

if (incoming_services == “items”)

deny()

else

enable()

This check out can be finished by our expert services them selves or by anything at all else on the execution route of the requests, but eventually it has to take place somewhere.

The major dilemma to address in advance of implementing these permissions is possessing a responsible way to assign an id to just about every services so that when we detect the expert services in our checks, they are who they declare to be.

Identification is critical. Without the need of id, there is no safety. Whenever we journey and enter a new region, we exhibit a passport that associates our persona with the document, and by executing so, we certify our id. Also, our expert services also will have to present a “virtual passport” that validates their identities.

Due to the fact the notion of rely on is exploitable, we will have to eliminate all forms of rely on from our systems—and hence, we will have to employ “zero-trust” safety.

Kong

KongThe id of the caller is despatched on just about every request by means of mTLS.

In order for zero-rely on to be applied, we will have to assign an id to just about every services occasion that will be utilised for just about every outgoing request. The id will act as the “virtual passport” for that request, confirming that the originating services is without a doubt who they declare to be. mTLS (Mutual transport Layer Protection) can be adopted to give both of those identities and encryption on the transport layer. Due to the fact just about every request now offers an id that can be confirmed, we can then implement the permissions checks.

The id of a services is typically assigned as a SAN (Issue Choice Title) of the originating TLS certificate linked with the request, as in the situation of zero-rely on safety enabled by a Kuma services mesh, which we will discover shortly.

SAN is an extension to X.509 (a conventional that is becoming utilised to build general public crucial certificates) that lets us to assign a personalized benefit to a certificate. In the situation of zero-rely on, the services identify will be a single of people values that is passed alongside with the certificate in a SAN industry. When a request is becoming been given by a services, we can then extract the SAN from the TLS certificate—and the services identify from it, which is the id of the service—and then employ the permission checks recognizing that the originating services really is who it promises to be.

Kong

KongThe SAN (Issue Choice Title) is incredibly usually utilised in TLS certificates and can also be explored by our browser. In the picture higher than, we can see some of the SAN values belonging to the TLS certificate for Google.com.

Now that we have explored the great importance of possessing identities for our expert services and we realize how we can leverage mTLS as the “virtual passport” that is integrated in just about every request our expert services make, we are even now left with numerous open subjects that we need to have to address:

- Assigning TLS certificates and identities on just about every occasion of just about every services.

- Validating the identities and checking permissions on just about every request.

- Rotating certificates above time to make improvements to safety and prevent impersonation.

These are incredibly difficult challenges to address since they successfully give the backbone of our zero-rely on safety implementation. If not finished effectively, our zero-rely on safety model will be flawed, and therefore insecure.

What’s more, the higher than tasks will have to be applied for just about every occasion of just about every services that our software teams are creating. In a typical business, these services occasions will include both of those containerized and VM-based mostly workloads running across a single or more cloud suppliers, maybe even in our physical datacenter.

The major error any business could make is inquiring its teams to make these functions from scratch just about every time they build a new software. The resulting fragmentation in the safety implementations will build unreliability in how the safety model is applied, producing the whole system insecure.

Company mesh to the rescue

Company mesh is a pattern that implements fashionable services connectivity functionalities in this sort of a way that does not have to have us to update our apps to consider benefit of them. Company mesh is typically delivered by deploying data airplane proxies following to just about every occasion (or Pod) of our expert services and a handle airplane that is the source of real truth for configuring people data airplane proxies.

Kong

KongIn a services mesh, all the outgoing and incoming requests are automatically intercepted by the data airplane proxies (Envoy) that are deployed following to just about every occasion of just about every services. The handle airplane (Kuma) is in demand of propagating the guidelines we want to set up (like zero-rely on) to the proxies. The handle airplane is hardly ever on the execution route of the services-to-services requests only the data airplane proxies dwell on the execution route.

The services mesh pattern is based mostly on the concept that our expert services should really not be in demand of managing the inbound or outbound connectivity. Around time, expert services composed in unique technologies will inevitably stop up possessing many implementations. Therefore, a fragmented way to handle that connectivity eventually will end result in unreliability. Additionally, the software teams should really focus on the software by itself, not on managing connectivity given that that should really ideally be provisioned by the underlying infrastructure. For these good reasons, services mesh not only provides us all kinds of services connectivity functionality out of the box, like zero-rely on safety, but also tends to make the software teams more efficient while offering the infrastructure architects entire handle above the connectivity that is becoming produced in the business.

Just as we did not talk to our software teams to stroll into a physical data center and manually join the networking cables to a router/swap for L1-L3 connectivity, these days we really don’t want them to make their individual community management software for L4-L7 connectivity. As an alternative, we want to use styles like services mesh to give that to them out of the box.

Zero-rely on safety by means of Kuma

Kuma is an open source services mesh (1st created by Kong and then donated to the CNCF) that supports multi-cluster, multi-location, and multi-cloud deployments across both of those Kuberenetes and digital machines (VMs). Kuma offers more than 10 guidelines that we can utilize to services connectivity (like zero-rely on, routing, fault injection, discovery, multi-mesh, and so forth.) and has been engineered to scale in significant dispersed enterprise deployments. Kuma natively supports the Envoy proxy as its data airplane proxy technologies. Relieve of use has been a focus of the challenge given that working day a single.

Kong

KongKuma can operate a dispersed services mesh across clouds and clusters — including hybrid Kubernetes plus VMs — by means of its multi-zone deployment mode.

With Kuma, we can deploy a services mesh that can produce zero-rely on safety across both of those containerized and VM workloads in a one or a number of cluster set up. To do so, we need to have to abide by these techniques:

one. Obtain and install Kuma at kuma.io/install.

two. Start our expert services and commence `kuma-dp` following to them (in Kubernetes, `kuma-dp` is automatically injected). We can abide by the acquiring began directions on the installation webpage to do this for both of those Kubernetes and VMs.

Then, after our handle airplane is running and the data airplane proxies are properly connecting to it from just about every occasion of our expert services, we can execute the ultimate phase:

3. Allow the mTLS and Site visitors Authorization guidelines on our services mesh by means of the Mesh and TrafficPermission Kuma methods.

In Kuma, we can build a number of isolated digital meshes on top of the identical deployment of services mesh, which is typically utilised to guidance a number of apps and teams on the identical services mesh infrastructure. To empower zero-rely on safety, we 1st need to have to empower mTLS on the Mesh useful resource of preference by enabling the mtls home.

In Kuma, we can make a decision to permit the system produce its individual certificate authority (CA) for the Mesh or we can set our individual root certificate and keys. The CA certificate and crucial will then be utilised to automatically provision a new TLS certificate for just about every data airplane proxy with an id, and it will also automatically rotate people certificates with a configurable interval of time. In Kong Mesh, we can also talk to a 3rd-get together PKI (like HashiCorp Vault) to provision a CA in Kuma.

For example, on Kubernetes, we can empower a builtin certificate authority on the default mesh by applying the subsequent useful resource by means of kubectl (on VMs, we can use Kuma’s CLI kumactl):

apiVersion: kuma.io/v1alpha1

kind: Mesh

metadata:

identify: default

spec:

mtls:

enabledBackend: ca-one

backends:

- identify: ca-one

form: builtin

dpCert:

rotation:

expiration: 1d

conf:

caCert:

RSAbits: 2048

expiration: 10y

Kong

Kong