Driving in the Snow is a Team Effort for AI Sensors

Nobody likes driving in a blizzard, including autonomous vehicles. To make self-driving
cars safer on snowy roads, engineers look at the problem from the car’s point of view.

A major challenge for fully autonomous vehicles is navigating bad weather. Snow especially
confounds crucial sensor data that helps a vehicle gauge depth, find obstacles and
keep on the correct side of the yellow line, assuming it is visible. Averaging more
than 200 inches of snow every winter, Michigan’s Keweenaw Peninsula is the perfect
place to push autonomous vehicle tech to its limits. In two papers presented at SPIE
Defense + Commercial Sensing 2021, researchers from Michigan Technological University
discuss solutions for snowy driving scenarios that could help bring self-driving options
to snowy cities like Chicago, Detroit, Minneapolis and Toronto.

Just like the weather at times, autonomy is not a sunny or snowy yes-no designation.
Autonomous vehicles cover a spectrum of levels, from cars already on the market with
blind spot warnings or braking assistance, to vehicles that can switch in and out of
self-driving modes, to others that can navigate entirely on their own. Major automakers
and research universities are still tweaking self-driving technology and algorithms.
Occasionally accidents occur, either due to a misjudgment by the car’s artificial
intelligence (AI) or a human driver’s misuse of self-driving features.

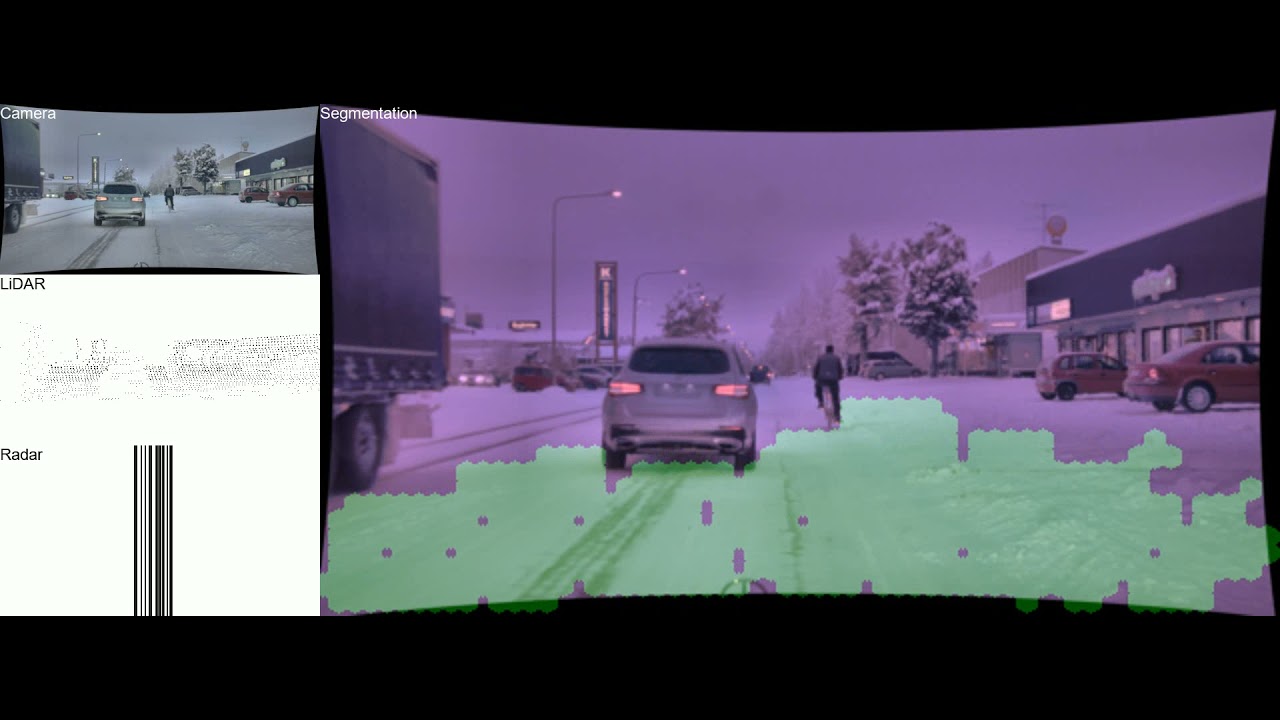

Drivable path detection using CNN sensor fusion for autonomous driving in the snow

A companion video to the SPIE research from Rawashdeh’s lab shows how the artificial
intelligence (AI) network segments the image area into drivable (green) and non-drivable.
The AI processes, and fuses, each sensor’s data despite the snowy roads and seemingly
random tire tracks, while also accounting for crossing and oncoming traffic.

Sensor Fusion

Humans have sensors, too: our scanning eyes, our sense of balance and movement, and
the processing power of our brain help us understand our environment. These seemingly
basic inputs allow us to drive in virtually every scenario, even if it is new to us,
because human brains are good at generalizing novel experiences. In autonomous vehicles,
two cameras mounted on gimbals scan and perceive depth using stereo vision to mimic
human vision, while balance and motion can be gauged using an inertial measurement
unit. But computers can only react to scenarios they have encountered before or been
programmed to recognize.
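
To make the stereo vision idea concrete, here is a minimal sketch of how depth can be recovered from two side-by-side cameras. It assumes an idealized, rectified pinhole camera pair, where depth equals focal length times baseline divided by disparity. The function name and the numbers are hypothetical and are not taken from the Michigan Tech vehicle.

```python
def depth_from_disparity(focal_px: float, baseline_m: float, disparity_px: float) -> float:
    """Depth in meters of a point seen by a rectified stereo pair.

    focal_px     -- focal length in pixels (assumed identical for both cameras)
    baseline_m   -- distance between the two camera centers, in meters
    disparity_px -- horizontal pixel shift of the point between left and right images
    """
    if disparity_px <= 0:
        # Zero disparity would mean the point is infinitely far away.
        raise ValueError("disparity must be positive")
    return focal_px * baseline_m / disparity_px


# Hypothetical values for illustration only.
near = depth_from_disparity(focal_px=700.0, baseline_m=0.12, disparity_px=14.0)
far = depth_from_disparity(focal_px=700.0, baseline_m=0.12, disparity_px=2.0)
print(f"near object: {near:.1f} m, far object: {far:.1f} m")
```

Small disparities correspond to distant objects, so a pixel or two of matching error (easy to get when falling snow hides texture) can shift the depth estimate considerably.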

Since artificial brains aren’t around yet, task-specific AI algorithms must take the
wheel, which means autonomous vehicles must rely on multiple sensors. Fisheye cameras
widen the view while other cameras act much like the human eye. Infrared picks up
heat signatures. Radar can see through the fog and rain. Light detection and ranging
(lidar) pierces through the dark and weaves a neon tapestry of laser beam threads.

“Every sensor has limitations, and every sensor covers another one’s back,” said Nathir
Rawashdeh, assistant professor of computing in Michigan Tech’s College of Computing and
one of the study’s lead researchers. He works on bringing the sensors’ data together
through an AI process called sensor fusion.

“Sensor fusion uses multiple sensors of different modalities to understand a scene,”
he said. “You cannot exhaustively program for every detail when the inputs have difficult
patterns. That’s why we need AI.”

Rawashdeh’s Michigan Tech collaborators include Nader Abu-Alrub, his doctoral student
in electrical and computer engineering, and Jeremy Bos, assistant professor of electrical
and computer engineering, along with master’s degree students and graduates from Bos’s
lab: Akhil Kurup, Derek Chopp and Zach Jeffries.

Bos explains that lidar, infrared and other sensors on their own are like the hammer
in an old adage. “‘To a hammer, everything looks like a nail,’” quoted Bos. “Well,
if you have a screwdriver and a rivet gun, then you have more options.”

Snow, Deer and Elephants

Most autonomous sensors and self-driving algorithms are being developed in sunny,
clear landscapes. Knowing that the rest of the world is not like Arizona or southern
California, Bos’s lab began collecting local data in a Michigan Tech autonomous vehicle
(safely driven by a human) during heavy snowfall. Rawashdeh’s team, notably Abu-Alrub,
pored over more than 1,000 frames of lidar, radar and image data from snowy roads
in Germany and Norway to start teaching their AI program what snow looks like and
how to see past it.

“All snow is not created equal,” Bos said, pointing out that the variety of snow makes
sensor detection a challenge. Rawashdeh added that pre-processing the data and ensuring
accurate labeling is an important step to ensure accuracy and safety: “AI is like
a chef: if you have good ingredients, there will be an excellent meal,” he said.
“Give the AI learning network dirty sensor data and you’ll get a bad result.”

Low-quality data is one problem and so is actual dirt. Much like road grime, snow
buildup on the sensors is a solvable but bothersome issue. Once the view is clear,
autonomous vehicle sensors are still not always in agreement about detecting obstacles.
Bos mentioned a great example of discovering a deer while cleaning up locally gathered
data. Lidar said that blob was nothing (30% chance of an obstacle), the camera saw
it like a sleepy human at the wheel (50% chance), and the infrared sensor shouted
WHOA (90% sure that is a deer).

Getting the sensors and their risk assessments to talk and learn from each other is
like the Indian parable of three blind men who find an elephant: each touches a different
part of the elephant (the creature’s ear, trunk and leg) and comes to a different
conclusion about what kind of animal it is. Using sensor fusion, Rawashdeh and Bos
want autonomous sensors to collectively figure out the answer, be it elephant, deer
or snowbank. As Bos puts it, “Rather than strictly voting, by using sensor fusion
we will come up with a new estimate.”
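
The difference between voting and producing a new estimate can be illustrated with the three deer readings quoted above. The sketch below is not the researchers’ method, which relies on a trained neural network. It swaps in a much simpler textbook technique, naive Bayes fusion in log-odds space, and assumes the sensors err independently and that the prior chance of an obstacle is 50%. The function names are hypothetical.

```python
import math


def logit(p: float) -> float:
    """Convert a probability (strictly between 0 and 1) to log-odds."""
    return math.log(p / (1.0 - p))


def majority_vote(probs, threshold=0.5):
    """Each sensor casts a yes/no vote; True if more than half say 'obstacle'."""
    votes = sum(1 for p in probs if p > threshold)
    return votes > len(probs) / 2


def fuse_independent(probs, prior=0.5):
    """Naive Bayes fusion: add each sensor's evidence (log-odds relative to the prior).

    Assumes the sensors' errors are independent, which real sensors only approximate.
    """
    log_odds = logit(prior) + sum(logit(p) - logit(prior) for p in probs)
    return 1.0 / (1.0 + math.exp(-log_odds))


# Readings from the deer example: lidar 30%, camera 50%, infrared 90%.
readings = [0.30, 0.50, 0.90]
print("majority vote says obstacle:", majority_vote(readings))
print(f"fused probability of obstacle: {fuse_independent(readings):.2f}")
```

Under these assumptions the vote dismisses the deer, because only the infrared sensor clears the 50% bar, while the fused estimate comes out near 79% because the infrared sensor’s strong evidence outweighs the lidar’s mild doubt.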

While navigating a Keweenaw blizzard is a ways out for autonomous vehicles, their
sensors can get better at learning about bad weather and, with advances like sensor
fusion, will be able to drive safely on snowy roads one day.

Michigan Technological University is a public research university, home to more than
7,000 students from 54 countries. Founded in 1885, the University offers more than
120 undergraduate and graduate degree programs in science and technology, engineering,
forestry, business and economics, health professions, humanities, mathematics, and
social sciences. Our campus in Michigan’s Upper Peninsula overlooks the Keweenaw Waterway
and is just a few miles from Lake Superior.